Redesigning a visual from scratch is one of the most time-consuming parts of a creative workflow. Whether you’re a freelance designer iterating on a client’s brand assets, a content creator refreshing a visual series, or a hobbyist experimenting with digital art styles, the blank-canvas problem is real. You have a direction in mind, maybe a reference image, a mood board, or even a rough draft — but rebuilding everything from zero wastes hours that could go into refinement and storytelling.

That’s where modern AI-powered image transformation has quietly become one of the most useful capabilities in a practical editing toolkit.

What Image-to-Image AI Actually Does for Creators

Most people associate AI image generation with text prompts — type a description, get a picture. But the more interesting workflow shift happening right now is input-to-output transformation: feeding an existing image into an AI model and using it as the structural or stylistic anchor for a new result.

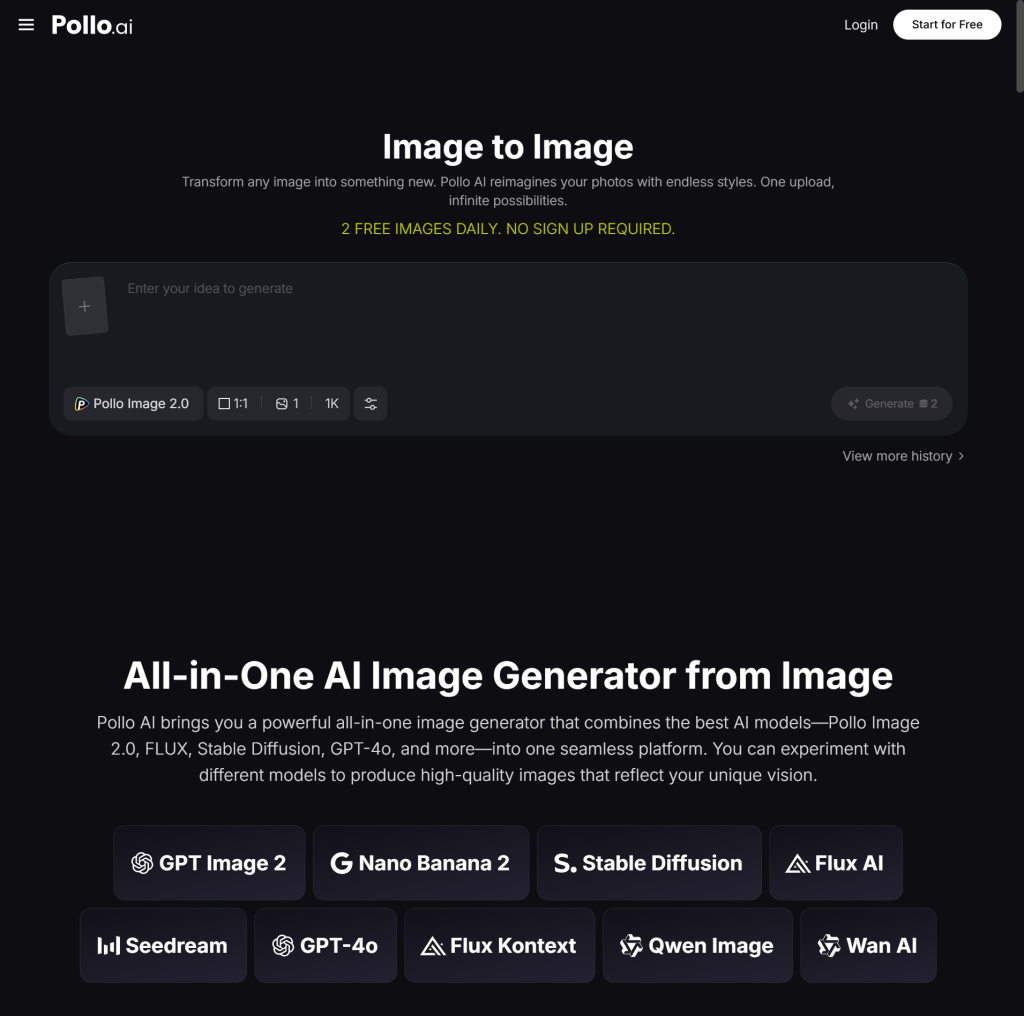

This is the core idea behind image-to-image AI, available through Pollo AI. Rather than generating visuals from text alone, you provide a source image (a photograph, a rough sketch, a product shot, a storyboard frame) and the model uses it as a reference point to generate something transformed, stylized, or enhanced. The output inherits the structure, composition, or subject from your input while applying whatever style or improvement you’re after.

For designers, this means you’re not throwing away your existing work. You’re extending it.

A product photographer can take a clean studio shot and transform it into a lifestyle image with a different environment. A game artist can take a character sketch and see it rendered in three different visual styles before deciding on a direction. A social media manager can take a single hero image and spin off a set of variations with consistent subject framing but different aesthetic treatments.

Pollo AI’s approach here isn’t just technically capable — it’s built around iteration speed. You can run multiple passes, adjust influence weights, and compare outputs without leaving the tool.
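To make the "multiple passes, different influence weights" idea concrete, here is a minimal planning sketch in Python. It does not call Pollo AI or any real API. The `VariationJob` class, the `plan_variations` helper, the style labels, and the 0-to-1 influence scale are all hypothetical, chosen only to show how one source image can fan out into a grid of comparable variations.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class VariationJob:
    """One planned transformation pass (all fields are illustrative)."""
    source_image: str  # path to the input image
    style: str         # hypothetical style label
    influence: float   # assumed scale: 0.0 = loose, 1.0 = stay close to the source


def plan_variations(source_image, styles, influences):
    """Return one job per (style, influence) pair, in a stable order."""
    for weight in influences:
        if not 0.0 <= weight <= 1.0:
            raise ValueError(f"influence must be within [0, 1], got {weight}")
    return [
        VariationJob(source_image, style, weight)
        for style, weight in product(styles, influences)
    ]


jobs = plan_variations(
    "hero.png",
    styles=["watercolor", "pixel-art", "cinematic"],
    influences=[0.3, 0.6, 0.9],
)
for job in jobs:
    print(job)
```

Planning the grid up front keeps the comparison fair: every style is tried at the same set of influence values, so differences in the outputs come from the style rather than from inconsistent settings.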

Why This Workflow Is Spreading Fast Among Editors and Designers

The reason image transformation workflows are gaining ground among practical creators isn’t hype — it’s throughput.

Traditional editing workflows are linear. You start, you build, you refine, you export. AI-assisted transformation workflows are more like branches on a tree. You start with a solid input and branch into multiple visual directions simultaneously, then choose what to develop further. The time investment shifts from creation to curation, and most working creatives find that easier to manage under deadline pressure.

This also changes the skill floor. Creators who are strong on concept and direction but less practiced in technical execution can now produce polished visual drafts at a pace that wasn’t realistic before. The gap between “I know what I want this to look like” and “I have a usable asset” is closing.

What Pollo AI has built around this workflow is particularly useful for editors working across varied projects. The toolset isn’t designed around a single aesthetic or use case. It handles style transfer, structural transformation, image enhancement, and more, which means it fits a broad range of project types rather than becoming a one-trick shortcut.

Using GoEnhance AI for Consistent Style Control at Scale

One of the more practical challenges in content production is maintaining visual consistency across a large set of assets. When you’re building out a campaign, a game, a portfolio series, or any visual body of work, you need your outputs to feel like they belong together — same quality level, similar aesthetic language, coherent color treatment.

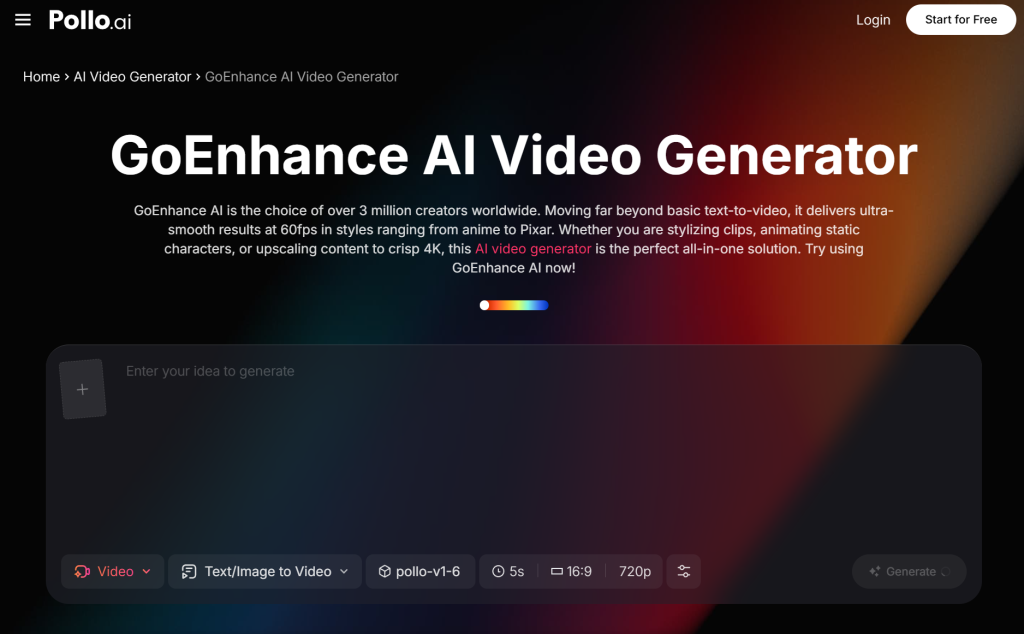

Pollo AI’s GoEnhance AI model was built with exactly this kind of task in mind. Rather than one-off image generation, it’s designed for workflows where both consistency and output quality matter. It applies stylistic enhancement and visual upgrades to existing assets in a way that scales: you’re not just getting one good output, you’re getting a repeatable process that works across a batch.

For editors managing content libraries, this matters a lot. Upscaling and enhancing older assets to match current production standards is a common, tedious task. With a model purpose-built for quality and consistency, that kind of asset refresh becomes something you can actually run systematically rather than case by case.

It also fits well into team-based creative workflows. When multiple people are contributing to a visual project, having a shared enhancement pass run through a consistent model keeps the final output cohesive even when the inputs came from different hands or sources.
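One way to picture a repeatable, shared enhancement pass is as a single preset applied to every asset in a batch. The sketch below is a hedged illustration in Python, not GoEnhance AI's or Pollo AI's actual interface. `EnhancePreset`, its fields, `stable_seed`, and `build_batch` are hypothetical names, and the idea of a per-asset seed is an assumption about how a tool might make reruns reproducible.

```python
import hashlib
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EnhancePreset:
    """Shared settings for a batch (fields are illustrative assumptions)."""
    style: str
    upscale_factor: int
    color_profile: str


def stable_seed(asset_name: str) -> int:
    """Derive a repeatable 32-bit seed from the asset name."""
    digest = hashlib.sha256(asset_name.encode("utf-8")).hexdigest()
    return int(digest[:8], 16)


def build_batch(assets, preset):
    """Attach the same preset to every asset; only input and seed differ."""
    return [
        {"input": name, "seed": stable_seed(name), **asdict(preset)}
        for name in sorted(assets)
    ]


preset = EnhancePreset(style="clean-studio", upscale_factor=2, color_profile="sRGB")
batch = build_batch(["banner_old.png", "avatar_old.png"], preset)
for job in batch:
    print(job)
```

Because the preset is frozen and the seed depends only on the file name, two teammates who run the same batch build identical job descriptions, which is the property that keeps a shared pass cohesive.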

Building a Faster, More Flexible Visual Pipeline

The broader shift these tools represent is worth naming clearly: AI image transformation isn’t replacing creative judgment — it’s compressing the time between idea and artifact. Creators still decide what the goal looks like, what style fits the project, what’s worth pursuing. The models handle the heavy lifting of getting from intention to output.

For software and tool-focused readers who care about workflow efficiency, the practical value is straightforward: fewer tools in your pipeline, faster iteration cycles, and more capacity to experiment without the time cost that usually makes experimentation feel risky.

Pollo AI sits squarely in this category of tools that reward regular use. The more you work with image-based inputs, the more you develop an instinct for what to feed it, how to frame your transformation goals, and where in your workflow AI-assisted generation saves the most time.

The best creative pipelines right now aren’t the ones built entirely on AI or entirely on manual craft — they’re the ones that use each where it makes the most sense. Image transformation sits exactly at that junction, and it’s one of the more immediately practical places to start.